ARCHIVE NEWS

September 2025

Middle Temple and the World: A Molyneux Globes Study Day

5 SEPTEMBER 2025

Find out how early modern Europeans saw the world in this exciting study day, taking place at Middle Temple Library on Staturday,11th October.

READ MOREApril 2025

St Bride Foundation Wayzgoose 27 April

17 APRIL 2025

Our friendly neighbours and inky co-conspirators at St Bride Foundation invite us to join in their annual celebration of all things print-related!

The tradition of the wayzgoose as a key date in the printers' calendar is first mentioned in 1683. Joseph Moxon devoted a whole chapter of his Mechanick Exercises: ... Applied to the Art of Printing to the 'Customs of the Chapel', as the association of journeymen in a printing house was known. Chief among these was the annual feast, aka wayzgoose or waygoose, given by the Master Printer to the workers, by all accounts a riotous and carnivalesque occasion. Moxon notes 'These Way-gooses, are always kept about Bartholomew-tide [24 August]. And till the Master-Printer have given this Way-goose, the Journey-men do not use to Work by Candle Light.' It's a tradition that has persisted in the printing community, with some modifications for modern sensibilities (not to mention health & safety concerns). The St Bride Foundation Wayzgoose kicks off the festive season well before St Bartholomew's Day, and promises to be an unmissable event. Check out their invitation below.

READ MOREJanuary 2025

Registered Designs, 1839-1991: A workshop at the National Archives, 5th March

9 JANUARY 2025

A practical training session at the National Archives offers a look at design copyright records.

Lead image: BT 43/187 range of design numbers from 3496 to 3541: Twelve textile designs registered by Thomson Brothers and Sons in 1843. All images taken from the National Archives, Collection BT/43 Patents, Designs and Trade Marks Office and predecessor: Ornamental Design Act 1842 Representations

READ MOREMarch 2024

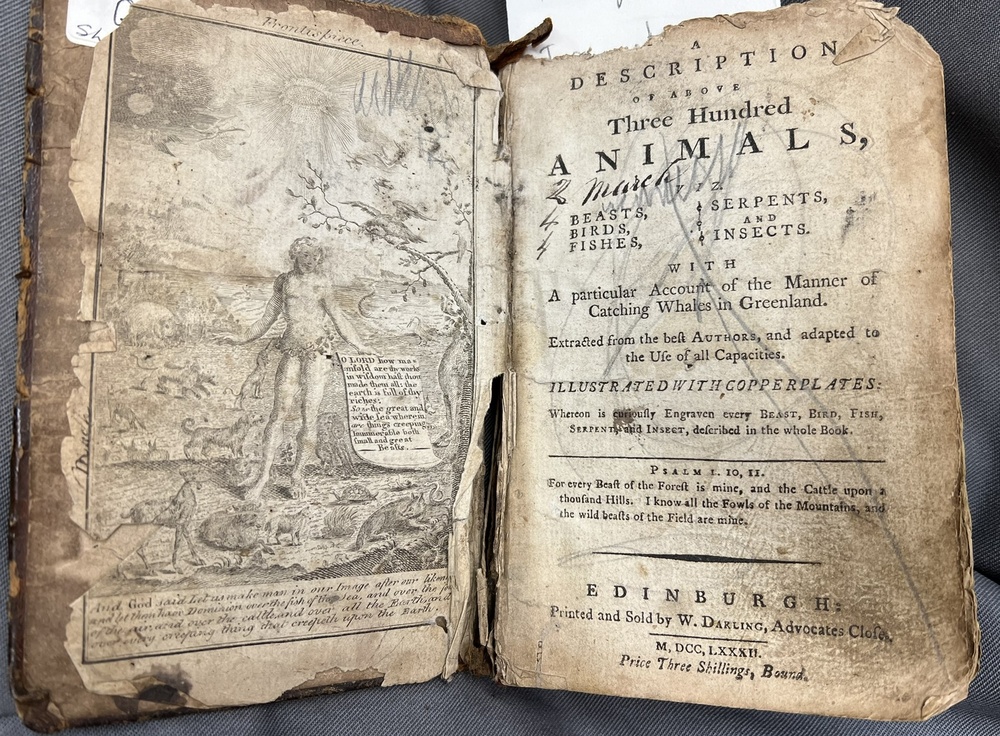

'Steal not this book for fear of shame': a hidden gem in the Stationers' collections

20 MARCH 2024

Postgraduate researcher and archive intern Beth DeBold uncovers one of the treasures of the Stationers' library, an eighteenth century children's book which found its way to the Hall all the way from Cumbria.

READ MOREFebruary 2024

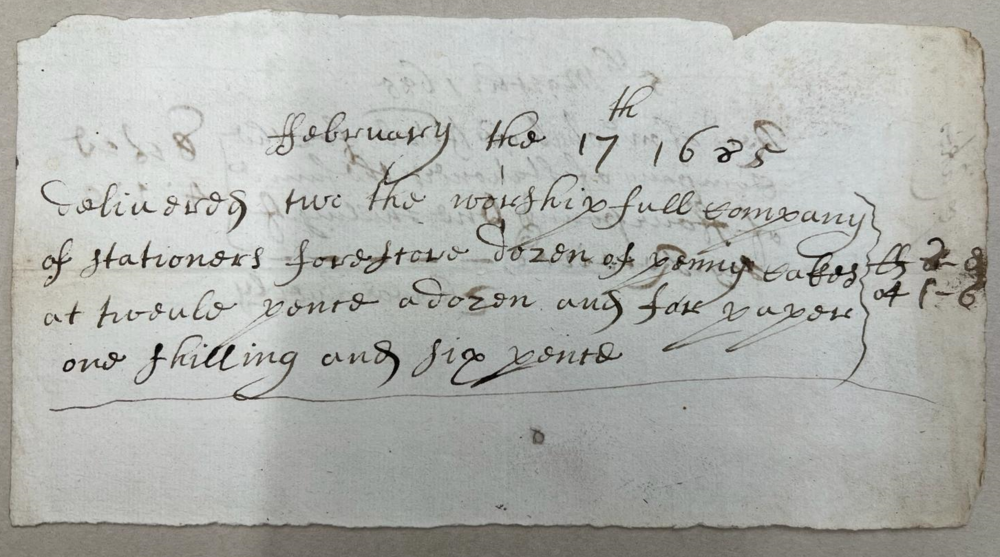

A History of Cakes and Ale

9 FEBRUARY 2024

February 13th is Shrove Tuesday - which for Stationers means celebrating the centuries' old tradition of Cakes and Ale, established by bookseller John Norton in 1613. Here archive intern Beth Debold explores the history of this tradition.

Main image: Delivery note for baker Thomas Averley's 'penny cakes' in preparation for Cakes and Ale, 1685. Stationers' Company Archive, TSC/D/11/05

READ MORE

December 2023

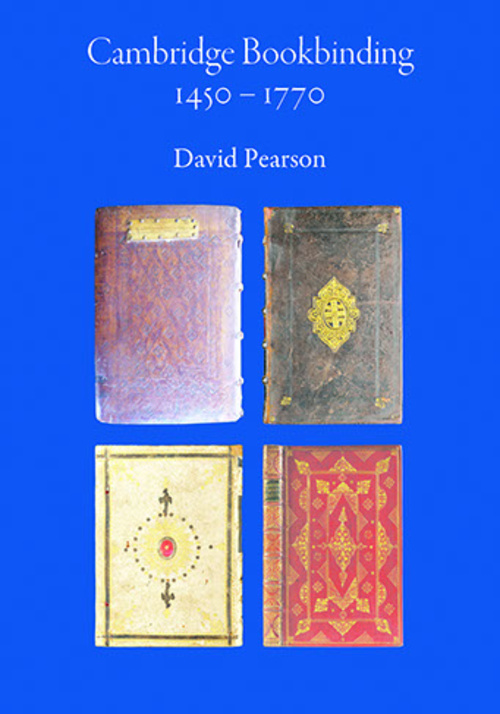

Cambridge Bookbinding 1450-1770

15 DECEMBER 2023

A fascinating new book by Liveryman Dr David Pearson, accompanied by a series of lectures, explores the history of book-binding in Cambridge.

READ MORE

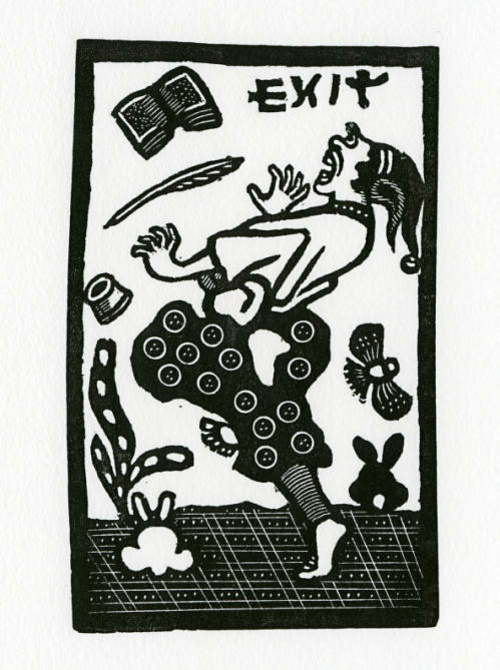

'New directions in the study of the Book Trades '- call for papers

4 DECEMBER 2023

Interested in exploring new research avenues in the history of print? Want to contribute to the discussion? Then check out the call for papers for 2024's annual Print Networks/Centre for Printing History Conference, Unfinished Business: Progress, Stasis and New Directions in the study of the Book Trade since Peter Isaac, Newcastle University, 9-10 July 2024.

All images on this page: ‘Print taken from an original Joseph Crawhall II woodblock’, Crawhall (Joseph II) Archive, Special Collections, Robinson Library, Newcastle University, UK

READ MOREJune 2023

A special visit to the Archive...

28 JUNE 2023

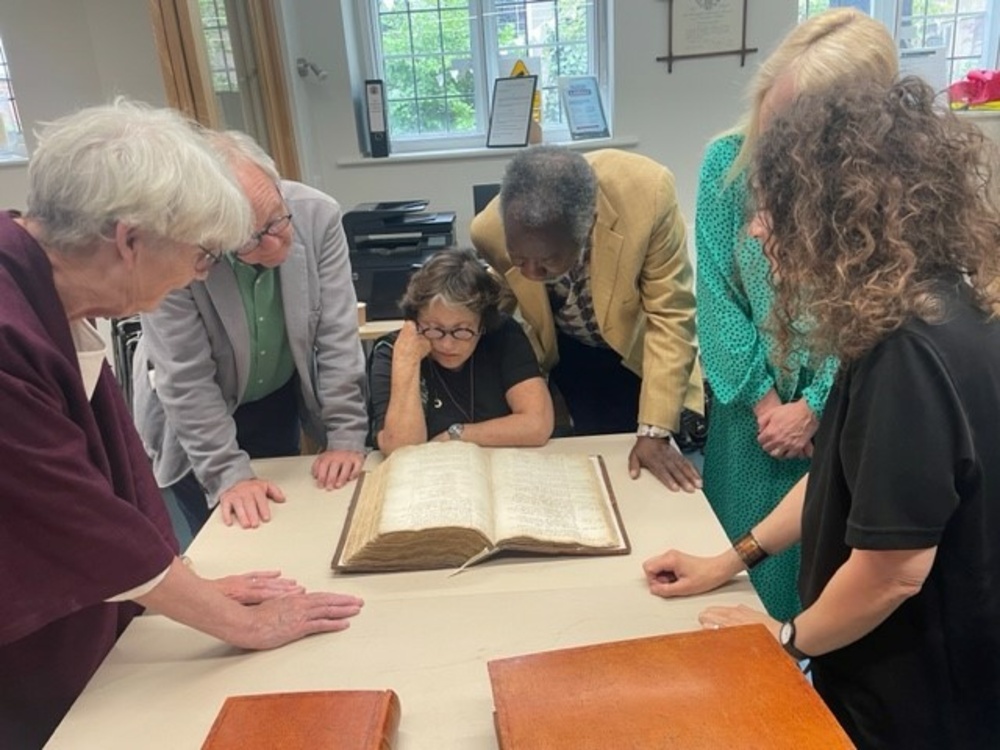

A very special visit from stars of the stage and screen Claire Bloom, Joseph Mydell and Bruce Alexander was the highlight of the week for the Stationers’ Company Archive.

Main photograph: Clustered around the Stationers' Register entry for Shakespeare's Folio are (l-r) Liverman Margaret Willes, Bruce Alexander, Claire Bloom, Joseph Mydell, Master Moira Sleight, and archivist Ruth Frendo

READ MORE

A visit to the Royal College of Music Museum

20 JUNE 2023

On June 13th, Court Assistant Carol Tullo and I visited the Royal College of Music Museum. Carol, who is the current Chair of the Library and Archive Committee, organised the meeting through the Musicians’ Company Junior Warden The Hon Richard Lyttelton, after last February's joint event The Shape of Music Copyright re-established closer working links between our two Companies. On the day, we were hosted by Stephen Johns, Artistic Director of the RCM, and Gabriele Rossi Rognoni, Museum Curator and Chair of Music & Material Culture.

READ MOREMay 2023

Queen's College First Folio comes to Stationers' Hall

25 MAY 2023

On Thursday 18th May, Stationers’ Hall was host to a very special guest: an edition of Shakespeare’s First Folio which once belonged to the great eighteenth-century actor and theatre manager David Garrick.

Main image shows Queen's College Librarian Dr Matthew Shaw and Court Assistant Professor Tim Connell with the First Folio. Photograph © Ben Broomfield for Queen's College Oxford

READ MORE

May events at St Bride Foundation

9 MAY 2023

Stationers and anyone with an interest in print history will be excited to learn of two upcoming events at St Bride's Foundation. On Thursay 11th May, at 7-8.30pm, designer and author Marcin Wichary will give a talk on the history and creative potential of typing keyboards. And on Thursday 25th May, 7-8.30pm, representatives of five printing institutions from across Britain and Ireland get together to discuss their histories and collections. For full details, see below.

READ MORE

A tribute to Robin Myers

4 MAY 2023

The death of our Honorary Archivist Emeritus, Robin Myers MBE, on Monday, 1 May 2023, was a huge blow to the loyal community of Stationers, historians, archivists and friends which grew up around her during her long and active life. It’s fair to say that, without her tireless promotion of the Stationers’ Company Archive, this blog wouldn’t exist, so today we’re taking a moment to remember her remarkable contribution.

READ MORE